How to use AI in hiring for fair, efficient recruitment

TL;DR:

- AI automates high-volume recruitment tasks but requires strict compliance and human oversight in Europe.

- A hybrid human-AI approach is most effective for trustworthy, nuanced hiring outcomes.

- Continuous bias monitoring and clear candidate communication are essential for responsible AI deployment.

HR teams across the Netherlands, UK, and Spain are drowning in applications while being held to higher standards of fairness than ever before. Candidate volumes are up, time-to-hire pressure is relentless, and regulators are watching closely. AI promises to cut through the noise, but it also brings real risks around bias, compliance, and candidate trust. This guide gives you a clear, practical roadmap: what AI actually does in hiring, what you need before you start, how to implement it step by step, and how to keep it working well for the long term.

Table of Contents

- Understanding AI in the hiring process

- What you need before deploying AI in hiring

- Step-by-step: Implementing AI into your hiring process

- Avoiding pitfalls: Monitoring and bias management

- Measuring results and ensuring continuous improvement

- Why hybrid human-AI hiring is the proven formula

- Next steps: Upgrading your hiring with AI-driven solutions

- Frequently asked questions

Key Takeaways

| Point | Details |
|---|---|
| AI hiring is highly regulated | Strict rules in the EU and existing UK laws require transparency, bias checks, and ongoing oversight for all AI recruitment tools. |
| Preparation is essential | HR teams must complete compliance reviews and pilots before rolling out AI in hiring. |
| Hybrid models work best | Combining human decision-making with AI reduces risk and improves quality of hire. |
| Monitor and improve continuously | Regular audits and diversity checks ensure AI delivers on fairness, speed, and candidate experience. |

Understanding AI in the hiring process

Let us start with what AI actually does, because “AI in hiring” covers a wide range of tools and not all of them carry the same risks or rewards.

At its core, AI in recruitment handles tasks that used to eat up enormous amounts of recruiter time. Think resume screening with AI, chatbot-driven candidate engagement, video interview analysis, and predictive matching that pulls signals from your applicant tracking system (ATS). These tools can process thousands of applications in the time it would take a human to review fifty. That is genuinely exciting for any team managing high-volume roles.

But here is where it gets important. Not all AI hiring tools are treated equally by regulators.

Key AI capabilities in recruitment:

- Resume and CV screening using skills-based matching

- Chatbots for initial candidate engagement and FAQ responses

- Video interview analysis assessing language, tone, and structure

- Predictive matching based on historical ATS data

- Automated scheduling and interview coordination

- Cognitive and behavioural assessments

Under the EU AI Act, recruitment AI is classified as high-risk. Tools used for resume screening, candidate evaluation, and job ad targeting in the Netherlands and Spain therefore require rigorous testing, human oversight, transparency, bias audits, and worker notification before deployment. This is not optional guidance. It is law, with full obligations kicking in from August 2026.

The UK takes a different approach. UK employers have no specific AI hiring laws but must comply with the Equality Act 2010 and UK GDPR, with ICO and government guidance emphasising transparency, fairness, and human oversight. Less prescriptive, but not a free pass.

| Region | Regulatory framework | Key obligations |
|---|---|---|
| Netherlands | EU AI Act (high-risk) | Human oversight, bias audits, worker notification, CE marking |
| Spain | EU AI Act (high-risk) | Same as Netherlands, full compliance by Aug 2026 |
| UK | Equality Act 2010, UK GDPR | Fairness, transparency, human review, ICO guidance |

Looking ahead, the regulatory picture for AI in recruitment is only going to get more detailed, not less.

Pro Tip: Document every AI assessment your system produces and store those records in a format that can be retrieved quickly for audits. Regulators in the EU can and will ask for them.
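To make that tip concrete, here is a minimal sketch of what a retrievable assessment record could look like. Every field name, the JSON-lines storage format, and the helper functions are our own illustrative assumptions; this is not a schema prescribed by the EU AI Act or by any vendor.

```python
import json
from dataclasses import dataclass, asdict
from datetime import datetime, timezone


@dataclass
class AssessmentRecord:
    """One AI assessment, stored so it can be pulled up for an audit.

    Field names are illustrative assumptions, not a regulatory schema.
    """
    candidate_id: str
    vacancy_id: str
    tool_name: str
    tool_version: str
    score: float
    human_reviewer: str   # the person accountable for the decision
    human_decision: str   # e.g. "advance", "reject", "hold"
    recorded_at: str      # ISO 8601 timestamp in UTC


def to_json_line(record):
    """Serialise a record as one JSON line, suitable for an append-only log."""
    return json.dumps(asdict(record), sort_keys=True)


def find_by_candidate(lines, candidate_id):
    """Return every stored record (as a dict) for one candidate."""
    records = (json.loads(line) for line in lines)
    return [r for r in records if r["candidate_id"] == candidate_id]


# Hypothetical example data
record = AssessmentRecord(
    candidate_id="cand-001",
    vacancy_id="vac-042",
    tool_name="example-screener",
    tool_version="1.3.0",
    score=0.82,
    human_reviewer="recruiter-17",
    human_decision="advance",
    recorded_at=datetime(2026, 1, 15, 9, 30, tzinfo=timezone.utc).isoformat(),
)
log = [to_json_line(record)]
print(find_by_candidate(log, "cand-001"))
```

Storing the tool version and the accountable reviewer alongside each score is the design choice that matters here: it lets you show which system produced an output and which human signed off on it.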

What you need before deploying AI in hiring

Before you switch anything on, you need a solid foundation. Rushing into AI deployment without the right groundwork is one of the most common and costly mistakes HR teams make.

Think of it like building a house. You would not start with the roof. You need the foundations first: a compliance assessment, a clear inventory of any AI tools already in use, and thorough vendor due diligence. This is especially true in regulated markets.

Steps to take before launching any AI hiring tool:

- Conduct a full compliance assessment against the EU AI Act (Netherlands and Spain) or UK GDPR and Equality Act (UK)

- Create an inventory of every AI tool currently used in your hiring process

- Run vendor due diligence: ask suppliers for bias testing results, data governance policies, and audit trails

- Establish a human oversight protocol for every AI-assisted decision point

- Brief workers and their representatives about planned AI use, as required under EU rules

- Define clear, measurable KPIs for any pilot programme

- Set up a candidate communication plan that discloses AI use upfront

The EU AI Act obligations for high-risk systems from August 2026 include mandatory human oversight, informing workers and their representatives, bias testing, and CE marking for providers. This means your vendor must also be compliant, not just your internal process.

| Requirement | Netherlands and Spain (EU AI Act) | UK |
|---|---|---|
| Human oversight at decision points | Mandatory | Strongly recommended |
| Worker notification before deployment | Mandatory | Good practice |
| Bias and equality testing | Mandatory | Required under Equality Act |
| Candidate transparency | Mandatory | Required under UK GDPR |
| CE marking for AI providers | Mandatory for providers | Not applicable |
| Audit trail and documentation | Mandatory | Required under UK GDPR |

Understanding AI oversight in recruitment is not just a compliance exercise. It is also the foundation for reducing bias with AI in a way that actually holds up under scrutiny.

Pro Tip: Always pilot new AI tools with clear, measurable KPIs before a wider rollout. A pilot with 50 candidates tells you far more than a vendor’s brochure ever will.

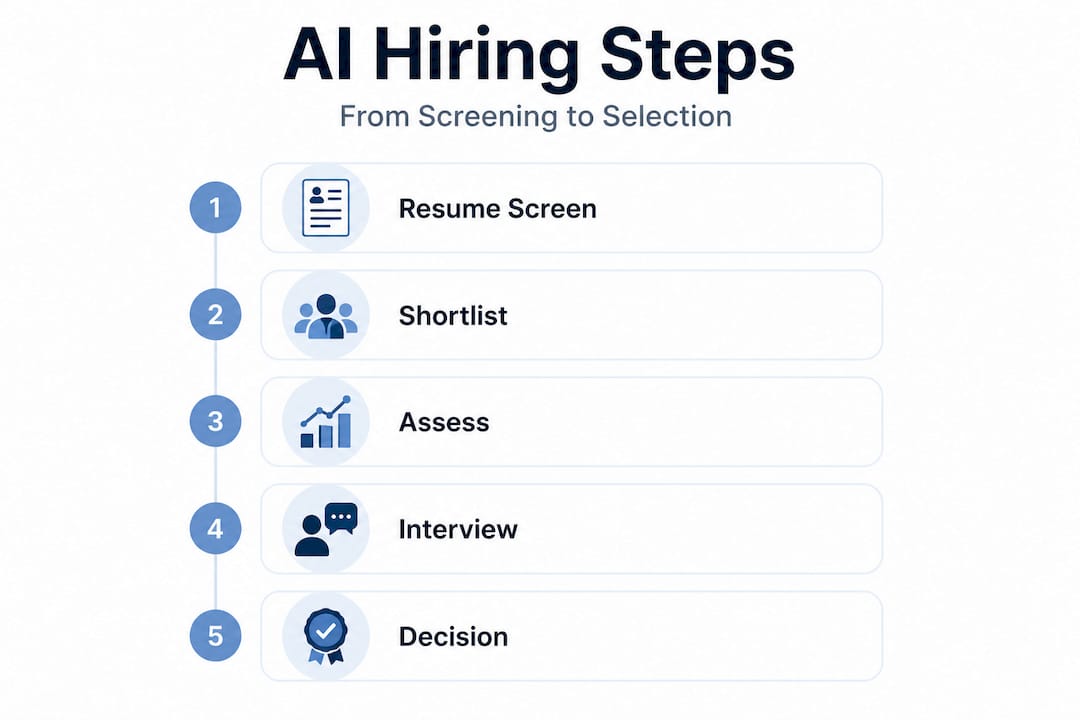

Step-by-step: Implementing AI into your hiring process

Right, you have done the groundwork. Now let us get into the actual implementation. This is where many HR teams either get it right and see real gains, or stumble by skipping steps that matter.

Key methodologies for AI in hiring include automated resume screening using skills matching beyond keywords, AI chatbots for initial engagement and scheduling, video interview analysis, predictive matching from ATS data, and structured assessments with human-in-the-loop review. Each of these has a place in a well-designed hiring workflow.

Step-by-step implementation guide:

- Select your tools based on compliance readiness, bias testing evidence, and ATS compatibility

- Map your hiring workflow and identify which stages benefit most from automation

- Integrate with your ATS to ensure data flows cleanly and audit trails are maintained

- Configure screening criteria using skills-based parameters rather than keyword matching alone

- Set up chatbot engagement for initial candidate contact and FAQ handling

- Pilot AI video interviews with a defined cohort and compare outputs against human reviewer scores

- Review and calibrate the system based on pilot data before scaling

- Train your HR team on how to interpret AI outputs and where human judgement takes over
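The difference between skills-based criteria and plain keyword matching can be sketched in a few lines. This is a simplified illustration under our own assumptions: the weights, the alias table, and the function names are all hypothetical, and a real screening tool will use far richer matching than this.

```python
def normalise(skill, aliases):
    """Map a raw skill label to a canonical name (lowercased, alias-resolved)."""
    key = skill.strip().lower()
    return aliases.get(key, key)


def skills_match_score(candidate_skills, required, aliases):
    """Weighted share of required skills the candidate covers, from 0.0 to 1.0.

    `required` maps canonical (lowercase) skill names to weights.
    """
    have = {normalise(s, aliases) for s in candidate_skills}
    total = sum(required.values())
    if total == 0:
        return 0.0
    covered = sum(weight for skill, weight in required.items() if skill in have)
    return covered / total


# Hypothetical role profile and alias table
required = {"python": 3, "sql": 2, "stakeholder management": 1}
aliases = {"py": "python", "postgres": "sql", "postgresql": "sql"}

# A keyword match on "Python" and "SQL" would miss this candidate entirely;
# resolving aliases first credits the underlying skills.
score = skills_match_score(["Py", "PostgreSQL"], required, aliases)
print(round(score, 2))
```

The weights make the screening criteria explicit and reviewable, which also helps when you need to explain a shortlist decision to a candidate or an auditor.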

High-impact tasks where AI adds the most value:

- High-volume CV screening and shortlisting

- Automated scheduling and interview coordination

- Structured video pitch analysis

- Cognitive and behavioural assessments

- Candidate ranking based on skills match

Practical steps for responsible AI hiring include rigorous pre- and ongoing bias testing, maintaining human oversight at decision points, ensuring transparency by informing candidates of AI use, piloting tools with KPIs before scaling, and integrating deeply with your ATS for end-to-end workflows.

| Approach | Best for | Human involvement |
|---|---|---|
| Fully automated screening | High-volume, entry-level roles | Review at shortlist stage |
| Hybrid AI and human review | Mid-level and specialist roles | Review at each stage |
| AI-assisted structured interviews | All levels | Human conducts final interview |
| Predictive matching | Internal mobility and succession | Human validates all recommendations |

The data on AI assessments for faster hiring is compelling, and the benefits of AI interviews extend well beyond speed alone. Consistency, structured scoring, and reduced unconscious bias are all genuine wins when the system is set up properly.

Document every workflow adjustment you make. If a regulator or candidate challenges a decision, your documentation is your defence.

Avoiding pitfalls: Monitoring and bias management

Here is where we need to be honest. AI in hiring is not a set-and-forget solution. Left unmonitored, it can create problems that are harder to fix than the ones it solved.

The most common pitfalls HR teams encounter are applicant drop-off at AI-gated stages, hidden bias baked into training data, and regulatory breaches from inadequate documentation or transparency.

Best practices for monitoring and bias management:

- Run bias audits at least quarterly, checking outcomes by gender, age, ethnicity, and disability status

- Review candidate drop-off rates at each AI-gated stage and investigate spikes

- Offer candidates a clear way to appeal or request human review of AI decisions

- Regularly update training data to reflect your current workforce and target candidate profile

- Compare AI scores against human reviewer scores on the same candidates to check alignment

- Monitor recruitment outcomes with AI interviews over time, not just at launch
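A quarterly bias audit of the kind described above can start with something as simple as comparing pass rates between groups. The sketch below is one possible approach, not a legal test: the 0.8 threshold borrows the "four-fifths" rule of thumb from US practice and is purely an assumption here, and the group labels and numbers are made up.

```python
from collections import Counter


def selection_rates(outcomes):
    """outcomes: iterable of (group, passed) pairs. Returns {group: pass rate}."""
    totals, passed = Counter(), Counter()
    for group, ok in outcomes:
        totals[group] += 1
        if ok:
            passed[group] += 1
    return {group: passed[group] / totals[group] for group in totals}


def impact_ratios(rates):
    """Each group's pass rate divided by the highest group's pass rate."""
    best = max(rates.values())
    if best == 0:
        return {group: 0.0 for group in rates}
    return {group: rate / best for group, rate in rates.items()}


def flag_groups(ratios, threshold=0.8):
    """Groups whose ratio falls below the (assumed) review threshold."""
    return sorted(group for group, ratio in ratios.items() if ratio < threshold)


# Hypothetical stage outcomes: 40 of 100 pass in group A, 25 of 100 in group B
outcomes = (
    [("A", True)] * 40 + [("A", False)] * 60
    + [("B", True)] * 25 + [("B", False)] * 75
)
rates = selection_rates(outcomes)
ratios = impact_ratios(rates)
print(rates, ratios, flag_groups(ratios))
```

A flagged group is a prompt for investigation, not proof of discrimination. Small sample sizes in particular can produce large swings, so treat the output as a starting point for human review.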

Important: Asynchronous AI interviews deter over 50% of applicants, particularly women, due to perceived unfairness. AI systems can also inherit biases from their training data or show positional bias, favouring the first resume reviewed. Ongoing monitoring is essential because human raters are themselves inconsistent, which makes them an imperfect benchmark, and AI sometimes scores underrepresented groups differently than humans do.

This is a nuanced picture. AI can reduce some forms of bias while amplifying others. The hybrid human-AI model is consistently recommended because pure automation lacks the nuance needed for soft skills assessment and can worsen candidate experience if the process feels opaque or unfair.

Understanding AI interviews in HR means accepting both the promise and the responsibility. The tools are powerful. The governance around them is what separates good hiring from risky hiring.

Pro Tip: Regularly update your AI training data and always give candidates a clear, accessible route to appeal an AI-driven decision. This is both good ethics and, in the EU, a legal requirement.

Measuring results and ensuring continuous improvement

You have implemented your tools, you are monitoring for bias, and your team is trained. Now comes the part that separates good AI hiring programmes from great ones: measuring what is actually working.

Using AI for high-volume screening and scheduling first, then integrating with your ATS, allows you to measure ROI through time and cost savings, diversity pass-through rates, and candidate experience scores. Disclosing AI use early and offering human appeals also reduces applicant deterrence significantly.

Suggested KPIs and how to track them:

- Time to hire: Measure from job posting to offer acceptance, before and after AI implementation

- Cost per hire: Track recruiter hours and advertising spend against number of hires

- Diversity pass-through rate: Monitor the proportion of underrepresented candidates at each stage

- Candidate satisfaction score: Use post-process surveys to gauge experience quality

- Offer acceptance rate: A drop here can signal candidate experience issues

- Recruiter time saved: Hours freed up by automation, redirected to high-value tasks
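Most of these KPIs are simple arithmetic once the underlying data is captured consistently. The sketch below shows one way to calculate three of them. The function names, the inputs, and the example figures are hypothetical, and your own definitions (for instance, what counts towards cost per hire) may reasonably differ.

```python
from datetime import date


def time_to_hire_days(posted, accepted):
    """Days from job posting to offer acceptance."""
    return (accepted - posted).days


def cost_per_hire(recruiter_hours, hourly_rate, advertising_spend, hires):
    """Recruiter time plus advertising spend, divided by number of hires."""
    if hires <= 0:
        raise ValueError("hires must be a positive number")
    return (recruiter_hours * hourly_rate + advertising_spend) / hires


def underrepresented_share_by_stage(funnel):
    """funnel: list of (stage, total_candidates, underrepresented_candidates).

    Returns the proportion of underrepresented candidates at each stage.
    """
    return {
        stage: (under / total if total else 0.0)
        for stage, total, under in funnel
    }


# Hypothetical example figures
days = time_to_hire_days(date(2026, 3, 2), date(2026, 3, 27))
cost = cost_per_hire(recruiter_hours=120, hourly_rate=40.0,
                     advertising_spend=1200.0, hires=3)
shares = underrepresented_share_by_stage(
    [("applied", 400, 120), ("shortlisted", 80, 20)]
)
print(days, cost, shares)
```

Run the same calculations on data from before and after implementation so that the comparison uses identical definitions on both sides.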

| Metric | Typical before AI | Typical after AI | Notes |
|---|---|---|---|
| Time to hire | 35 to 45 days | 18 to 25 days | Varies by role complexity |
| Cost per hire | £3,500 to £5,000 | £1,800 to £2,800 | Savings from reduced recruiter hours |
| Diversity pass-through | 25 to 35% | 35 to 50% | Depends on bias audit quality |
| Candidate satisfaction | 60 to 70% positive | 70 to 85% positive | Improves with transparency |

Continuous improvement means building feedback loops. Review your KPIs monthly in the first six months, then quarterly. Hold regular calibration sessions where your HR team compares AI outputs against their own assessments. Check the benefits of AI assessment against your actual data, not just industry benchmarks.

Audits should be scheduled, not reactive. Build them into your annual HR calendar and treat them as seriously as financial audits.

Why hybrid human-AI hiring is the proven formula

We have seen a lot of enthusiasm for full automation in hiring, and we understand the appeal. The idea of removing human bias entirely by handing decisions to an algorithm sounds logical. But in practice, it rarely works that way.

Automation is genuinely brilliant at handling volume. It can screen five thousand applications overnight and flag the top two hundred based on skills match. That is a real win. But the moment you need to assess cultural fit, communication style, resilience, or potential, pure automation starts to fall short. These are deeply human judgements, and they matter enormously for long-term hire quality.

The role of human decision-makers in an AI-assisted process is not just a regulatory checkbox. It is the thing that makes the whole system trustworthy. Candidates know when they are being processed by a machine with no human in the loop, and it affects how they feel about your employer brand. In competitive talent markets, that feeling matters.

What we have seen consistently is that hybrid models outperform both pure AI and all-human processes. They are faster than all-human hiring, more consistent than all-human scoring, and more nuanced than full automation. They also hold up better under regulatory scrutiny because there is always a human accountable for the final decision.

The uncomfortable truth is that AI in hiring is only as good as the humans who design, monitor, and govern it. The technology is a tool. The wisdom, accountability, and judgement still sit with your team.

Pro Tip: Schedule periodic reviews where your team compares AI-generated scores against their own independent assessments of the same candidates. Gaps in alignment are your most valuable source of insight for improving both the AI and your human reviewers.
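For a calibration session like this, a small script can summarise how closely AI and human scores line up. This is a rough sketch under our own assumptions: both sets of scores use the same scale, a gap of two points or more is worth discussing, and the function names are hypothetical.

```python
from math import sqrt


def pearson(xs, ys):
    """Pearson correlation between two equally long lists of scores."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    if var_x == 0 or var_y == 0:
        raise ValueError("scores must vary for a correlation to be defined")
    return cov / sqrt(var_x * var_y)


def alignment_report(ai_scores, human_scores, gap_threshold=2.0):
    """Summarise agreement between AI and human scores for the same candidates."""
    if len(ai_scores) != len(human_scores) or len(ai_scores) < 2:
        raise ValueError("need two equally long lists with at least two scores")
    gaps = [a - h for a, h in zip(ai_scores, human_scores)]
    return {
        "pearson_r": pearson(ai_scores, human_scores),
        "mean_abs_gap": sum(abs(g) for g in gaps) / len(gaps),
        # Positions of candidates whose scores differ enough to discuss
        "large_gap_indexes": [
            i for i, g in enumerate(gaps) if abs(g) >= gap_threshold
        ],
    }


# Hypothetical scores on a 1 to 10 scale for the same four candidates
report = alignment_report([7, 5, 8, 4], [6, 5, 5, 4])
print(report)
```

The candidates listed under `large_gap_indexes` are the ones to bring to the session: each one is a case where either the AI or the human reviewer saw something the other did not.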

Next steps: Upgrading your hiring with AI-driven solutions

If you are ready to move beyond CV screening and towards something that actually predicts performance, we are over the moon to help. Real assessments, not paper credentials, are the foundation of smarter hiring.

At We Are Over The Moon, we have built a skills-matching platform that replaces outdated CV reviews with AI interviews, company challenges, cultural matching, cognitive tests, and video pitches. Every tool is designed for compliant, bias-resistant hiring in markets like the Netherlands, UK, and Spain. If you want to see how AI candidate validation works in practice, or if you want to talk through your specific hiring challenges, the Over The Moon team is ready to help you build a hiring process you can be genuinely proud of.

Frequently asked questions

Is using AI for hiring legal in the Netherlands, Spain, and the UK?

Yes, but the rules differ significantly. In the Netherlands and Spain, EU AI Act high-risk obligations apply from August 2026, requiring human oversight, bias audits, and worker notification. In the UK, anti-discrimination and data protection laws still apply firmly, even without specific AI legislation.

How do I avoid bias when using AI in recruitment?

Rigorous bias testing before and during deployment is essential, alongside keeping human reviewers at key decision points and giving candidates a clear route to appeal AI-driven outcomes.

What is the biggest risk of using AI in hiring?

Asynchronous AI interviews deter over 50% of applicants, particularly women, and unchecked training data bias can quietly disadvantage underrepresented groups without anyone noticing until the damage is done.

How can I prove my AI hiring tools improve diversity and efficiency?

Track your time to hire, diversity rates, and candidate satisfaction before and after implementation. The numbers will tell you clearly whether your tools are delivering or need recalibrating.
