What is a matching algorithm? A guide for HR recruitment in 2026

Matching algorithms are often dismissed as simple keyword filters, scanning CVs for exact job title matches or buzzwords. This misconception persists even though major platforms struggle to deliver relevant candidates, and recruiters wade through hundreds of mismatched applications daily. Modern matching algorithms are far more sophisticated, combining skills graphs, semantic search, and learning-to-rank models with fairness controls. This guide explains how advanced matching algorithms work, why they matter for UK and Spain recruitment teams, and how to implement them effectively in your hiring process.

Table of Contents

- Understanding Matching Algorithms In Recruitment

- Challenges And Nuances In Candidate-Job Matching

- Advances In Matching Technology And Evaluation

- Applying Matching Algorithms Effectively In Your Recruitment Process

- Discover Advanced AI Recruitment Solutions With We Are Over The Moon

- Frequently Asked Questions

Key takeaways

| Point | Details |
|---|---|
| Algorithms beyond keywords | Modern systems use skills graphs, semantic embeddings, and learning-to-rank models instead of simple keyword filters. |
| Rapid AI adoption in HR | 38% of HR leaders already pilot or actively use generative AI tools in their recruitment workflows. |
| Fairness and transparency matter | Effective matching requires built-in fairness controls, interpretability, and continuous human oversight to avoid bias. |
| Training beats model size | Strategic training approaches deliver better matching performance than simply deploying larger AI models. |
| Growing workplace exposure | Worker exposure to automated matching systems could rise from 42.3% to 55.5% in the medium term. |

Understanding matching algorithms in recruitment

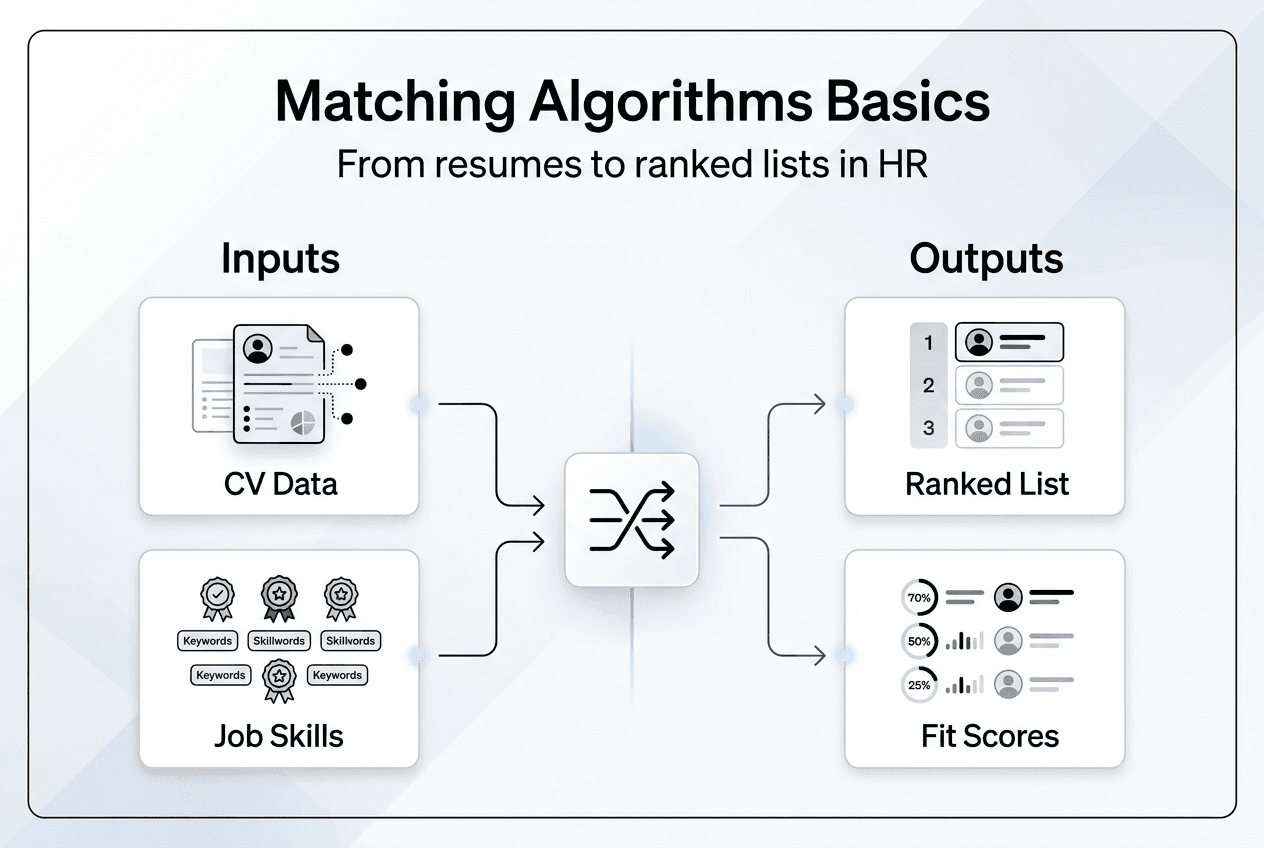

A matching algorithm in recruitment is a computational system that evaluates how well candidates align with job requirements, producing ranked lists or compatibility scores. Unlike basic keyword filters that search for exact term matches, modern candidate matching algorithms combine multiple sophisticated components to assess fit. These systems build skills graphs that map relationships between competencies, deploy semantic search embeddings to understand meaning beyond exact wording, and use learning-to-rank models that improve over time with feedback.

The most effective implementations incorporate fairness controls from the outset, ensuring algorithms don’t amplify existing biases in historical hiring data. Human judgement remains central, with algorithms serving as decision support tools rather than autonomous gatekeepers. This hybrid approach addresses the fundamental weakness of keyword filters, which miss context and struggle with messy, inconsistent data from applicant tracking systems.

Consider why simple keyword matching fails. A candidate with “team leadership” experience might be excluded from a search for “management skills” despite having relevant capabilities. Job descriptions use varied terminology, candidates describe identical skills differently, and context matters enormously. Did someone “manage” a project as the sole decision maker, or were they part of a managed team? Keyword filters cannot distinguish these nuances.
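As a rough illustration of that failure, the sketch below compares an exact-phrase filter with one that also accepts related terms. The `RELATED_TERMS` table and both function names are hypothetical and hand-written for this example; a production system would learn such relationships rather than hard-code them.

```python
def keyword_match(query: str, cv_text: str) -> bool:
    """Naive filter: the exact query phrase must appear in the CV text."""
    return query.lower() in cv_text.lower()


# Hypothetical, hand-made group of related terms (assumption for illustration).
RELATED_TERMS = {
    "management skills": {"management skills", "team leadership", "people management"},
}


def expanded_match(query: str, cv_text: str) -> bool:
    """Match if the query or any term related to it appears in the CV text."""
    text = cv_text.lower()
    terms = RELATED_TERMS.get(query.lower(), {query.lower()})
    return any(term in text for term in terms)


cv = "Five years of team leadership across two product squads."
print(keyword_match("management skills", cv))   # False
print(expanded_match("management skills", cv))  # True
```

The exact-phrase filter rejects the candidate, whilst the expanded version accepts them. Neither version addresses the question of context, such as whether someone led a team or merely worked within one.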

Modern matching algorithms address these limitations through several core features:

- Semantic understanding that recognises synonyms, related concepts, and skill adjacencies

- Graph-based reasoning that maps how skills, roles, and industries interconnect

- Contextual analysis that weighs experience depth, recency, and relevance

- Fairness monitoring that detects and mitigates demographic bias in recommendations

- Explainability tools that show why specific candidates ranked highly

These capabilities transform matching from a blunt filtering exercise into a nuanced assessment that surfaces candidates who might otherwise be overlooked. For HR teams managing high-volume recruitment in competitive markets like the UK and Spain, this shift represents a fundamental improvement in candidate matching efficiency and quality.
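To make graph-based reasoning more concrete, here is a minimal sketch of a skills graph in which related skills are connected and closeness is measured in hops. The graph contents, the `decay` parameter, and the function names are all assumptions chosen for illustration, not a description of any particular product.

```python
from collections import deque

# Hypothetical skill adjacency graph: each skill maps to directly related skills.
SKILL_GRAPH = {
    "team leadership": {"people management", "mentoring"},
    "people management": {"team leadership", "management skills"},
    "management skills": {"people management"},
    "mentoring": {"team leadership"},
    "python": {"data analysis"},
    "data analysis": {"python"},
}


def skill_distance(source, target, graph=SKILL_GRAPH):
    """Breadth-first search: hops between two skills, or None if unconnected."""
    if source == target:
        return 0
    seen = {source}
    queue = deque([(source, 0)])
    while queue:
        skill, hops = queue.popleft()
        for neighbour in graph.get(skill, ()):
            if neighbour == target:
                return hops + 1
            if neighbour not in seen:
                seen.add(neighbour)
                queue.append((neighbour, hops + 1))
    return None


def adjacency_score(source, target, decay=0.5):
    """1.0 for identical skills, multiplied by `decay` per hop, 0.0 if unconnected."""
    hops = skill_distance(source, target)
    return 0.0 if hops is None else decay ** hops


print(adjacency_score("team leadership", "management skills"))  # 0.25
print(adjacency_score("python", "management skills"))           # 0.0
```

Real skills graphs are far larger and usually carry weighted or typed edges, but the principle is the same: a candidate can earn partial credit for a skill that sits close to the one requested.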

Challenges and nuances in candidate-job matching

Even sophisticated algorithms face significant obstacles when matching candidates to roles. Candidate matching breaks because keyword filters miss context, evaluation criteria vary by person, ATS data is messy, and fairness isn’t embedded into the process. These challenges compound in real-world recruitment environments where data quality varies wildly and hiring managers apply inconsistent standards.

Data complexity creates the first major hurdle. CVs arrive in dozens of formats, use inconsistent terminology, and omit crucial context about responsibilities and achievements. One candidate might list “Python” under skills whilst another embeds it within project descriptions. Parsing this information reliably requires natural language processing that can extract meaning from unstructured text, but even advanced systems struggle with ambiguous or incomplete data.

Evaluation criteria present another layer of difficulty. What one hiring manager considers essential experience, another views as merely helpful. These subjective judgements shift between roles, departments, and organisations. Algorithms trained on historical hiring decisions inherit these inconsistencies, potentially amplifying biases when certain candidate profiles were systematically favoured or excluded in the past.

Fairness and compliance concerns intensify these challenges. Without deliberate design, matching algorithms can perpetuate discrimination based on protected characteristics. A system trained on data where certain demographics were historically underrepresented in senior roles might learn to downrank similar candidates, creating a self-reinforcing cycle. Legal frameworks in the UK and EU increasingly scrutinise automated hiring tools, making fairness not just an ethical imperative but a regulatory requirement.

Neural approaches in candidate-job matching often act as black boxes, raising interpretability concerns. When an algorithm ranks one candidate above another, can you explain why? Hiring managers need transparent reasoning to trust recommendations, and candidates deserve to understand assessment criteria. Opaque neural networks that deliver accurate predictions but no explanations create practical and legal problems.

Recruiters commonly encounter these specific challenges with current matching tools:

- Irrelevant candidates appearing in top results despite poor actual fit

- Qualified candidates buried in rankings due to terminology mismatches

- Inability to explain why the system recommended specific individuals

- Bias concerns when demographic patterns emerge in shortlists

- Integration difficulties with existing ATS and HR systems

Fairness and interpretability aren’t optional features in matching algorithms. They’re fundamental requirements for building trust, meeting compliance obligations, and delivering genuinely valuable recruitment outcomes.

Addressing these challenges requires moving beyond pure prediction accuracy to consider the entire sociotechnical system. Algorithms must be designed with fairness constraints, evaluated for disparate impact, and deployed with human oversight. The technical sophistication of the model matters less than how thoughtfully it’s integrated into your recruitment workflow.
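Evaluating for disparate impact can start with simple arithmetic on shortlisting rates. One widely cited heuristic is the four-fifths rule from US employment guidance, which flags any group whose selection rate falls below 80% of the highest group's rate. The sketch below uses it purely as an illustration, not as a UK or EU legal test; the group labels, counts, and function names are invented for the example.

```python
def selection_rates(outcomes):
    """outcomes maps group -> (shortlisted, applicants); returns rate per group."""
    return {group: s / n for group, (s, n) in outcomes.items() if n > 0}


def impact_ratios(outcomes):
    """Each group's selection rate divided by the highest group's rate."""
    rates = selection_rates(outcomes)
    best = max(rates.values(), default=0.0)
    if best == 0:
        return {group: 1.0 for group in rates}  # nobody shortlisted: no disparity
    return {group: rate / best for group, rate in rates.items()}


def flagged_groups(outcomes, threshold=0.8):
    """Groups whose impact ratio falls below the threshold (0.8 = four-fifths)."""
    ratios = impact_ratios(outcomes)
    return sorted(group for group, ratio in ratios.items() if ratio < threshold)


# Invented counts for illustration only.
sample = {"group_a": (30, 100), "group_b": (18, 100)}
print(flagged_groups(sample))  # ['group_b']
```

A flag of this kind is a prompt for human review rather than proof of discrimination, and small sample sizes can make the ratio unstable.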

Advances in matching technology and evaluation

Recent innovations in matching technology demonstrate measurable improvements in accuracy, fairness, and practical utility. Fine-tuned transformers and graph neural networks improve ranking accuracy and screening sensitivity in candidate-job matching, outperforming earlier approaches based on simple text similarity or keyword overlap. These architectures capture complex relationships between skills, understand contextual nuances, and learn from feedback to refine their predictions.

Transformer models, originally developed for language translation, excel at understanding semantic meaning in job descriptions and CVs. They recognise that “led cross-functional initiatives” and “managed interdepartmental projects” describe similar capabilities, even when no keywords overlap. Graph neural networks complement this by modelling how skills, roles, and industries connect, enabling the system to infer that experience in adjacent domains might transfer effectively to a new position.
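Semantic similarity of this kind is commonly measured as the cosine of the angle between two embedding vectors. The sketch below shows only that comparison step. The three-dimensional vectors are made up by hand to stand in for real embeddings, which a trained model would produce and which typically have hundreds of dimensions.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors (0.0 if either is zero)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


# Made-up vectors standing in for model-generated embeddings (assumption).
TOY_EMBEDDINGS = {
    "led cross-functional initiatives": [0.9, 0.8, 0.1],
    "managed interdepartmental projects": [0.8, 0.9, 0.2],
    "maintained laboratory equipment": [0.1, 0.2, 0.9],
}

query = TOY_EMBEDDINGS["led cross-functional initiatives"]
for phrase, vector in TOY_EMBEDDINGS.items():
    print(f"{phrase}: {cosine_similarity(query, vector):.2f}")
```

With these toy values the two leadership phrases score close to 1.0 against each other despite sharing no keywords, whilst the unrelated phrase scores much lower.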

Training strategies matter more than model size for effective matching. A smaller model trained on high-quality, diverse data with careful attention to fairness constraints often outperforms a massive model trained carelessly. Strategic approaches include curriculum learning that gradually increases task difficulty, active learning that focuses on ambiguous cases, and multi-task training that jointly optimises for relevance and fairness.

Multilingual capabilities represent another significant advance, particularly valuable for organisations recruiting across the UK and Spain. Modern matching systems handle job titles and skill descriptions in multiple languages, recognising equivalent terms and cultural variations in how roles are described. This enables consistent candidate evaluation regardless of the language used in applications or job postings.

TalentCLEF 2025 is the first evaluation campaign focused on skill and job title intelligence, and it drew many participating teams. This benchmark provides standardised datasets and metrics for comparing matching approaches, accelerating progress by enabling researchers and developers to measure improvements objectively. Tasks include job title normalisation, skill extraction, and occupation prediction, all critical components of effective matching systems.
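Objective comparison depends on agreed ranking metrics. The sketch below implements two standard ones from information retrieval, precision at k and average precision, as an example of how a ranked shortlist can be scored against known good candidates. It does not claim to reproduce the metrics of any specific benchmark, and the candidate identifiers are invented.

```python
def precision_at_k(ranked_ids, relevant_ids, k):
    """Share of the top-k ranked candidates that are actually relevant."""
    top_k = ranked_ids[:k]
    if not top_k:
        return 0.0
    return sum(1 for cid in top_k if cid in relevant_ids) / len(top_k)


def average_precision(ranked_ids, relevant_ids):
    """Mean of the precision values at each rank where a relevant candidate appears."""
    if not relevant_ids:
        return 0.0
    hits = 0
    total = 0.0
    for rank, cid in enumerate(ranked_ids, start=1):
        if cid in relevant_ids:
            hits += 1
            total += hits / rank
    return total / len(relevant_ids)


# Invented example: the system's ranking and the recruiter-confirmed good fits.
ranking = ["c3", "c1", "c7", "c2", "c9"]
relevant = {"c3", "c2"}

print(precision_at_k(ranking, relevant, k=3))  # one of the top three is relevant
print(average_precision(ranking, relevant))    # 0.75
```

Averaging the second metric over many job postings gives mean average precision, which rewards systems that place good candidates near the top rather than merely somewhere in the list.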

The following table illustrates performance improvements from recent architectural advances:

| Approach | Ranking Accuracy | Fairness Score | Interpretability |
|---|---|---|---|
| Keyword matching | 62% | Low | High |
| Basic embeddings | 74% | Medium | Medium |
| Fine-tuned transformers | 86% | High | Medium |
| Graph neural networks | 89% | High | Low |

Pro Tip: When evaluating matching technology for your recruitment process, prioritise systems that combine strong performance with built-in fairness controls and explainability features. Raw accuracy matters less than trustworthy, defensible recommendations you can confidently present to hiring managers and candidates.

These advances create practical opportunities for HR teams to apply AI to candidate screening in ways that genuinely improve hiring outcomes. The technology has matured beyond experimental prototypes to production-ready systems that handle real-world complexity whilst maintaining fairness and transparency.

Applying matching algorithms effectively in your recruitment process

Implementing sophisticated matching algorithms requires thoughtful integration with existing workflows and continuous human oversight. The fix is a matching system that codifies success profiles, expands discovery with semantic search, explains scores, keeps humans in the loop, and runs continuous fairness checks with immutable logs. This comprehensive approach addresses technical, organisational, and ethical dimensions of algorithmic recruitment.

Begin by codifying what success looks like in each role. Work with hiring managers to define essential skills, valuable experience, and cultural fit criteria explicitly. This structured profile becomes the foundation for algorithmic matching, ensuring the system optimises for outcomes you actually care about rather than superficial keyword matches. Document these profiles clearly so they can be updated as roles evolve.
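One way to make a success profile explicit is to store it as structured data that both people and software can read. The sketch below is a minimal example under stated assumptions: the `SuccessProfile` class, its fields, and the scoring rule (essential skills act as a gate, desirable skills carry weights) are hypothetical design choices, not a prescribed standard.

```python
from dataclasses import dataclass, field


@dataclass
class SuccessProfile:
    """Hypothetical structured description of what success looks like in a role."""
    role: str
    essential: set = field(default_factory=set)    # must-have skills
    desirable: dict = field(default_factory=dict)  # skill -> relative weight

    def score(self, candidate_skills):
        """Return 0.0 if any essential skill is missing, else the weighted
        share of desirable skills the candidate holds (1.0 if none are defined)."""
        skills = set(candidate_skills)
        if not self.essential <= skills:
            return 0.0
        total_weight = sum(self.desirable.values())
        if total_weight == 0:
            return 1.0
        earned = sum(w for skill, w in self.desirable.items() if skill in skills)
        return earned / total_weight


analyst = SuccessProfile(
    role="Data analyst",
    essential={"sql"},
    desirable={"python": 2.0, "stakeholder engagement": 1.0},
)

print(analyst.score({"sql", "python"}))  # two thirds of the desirable weight
print(analyst.score({"python"}))         # 0.0, essential skill missing
```

A strict gate on essential skills is the simplest rule to explain, but it reintroduces exact-match brittleness. In practice the membership check would usually be replaced by a semantic or graph-based comparison.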

Semantic skills adjacency expands your candidate pool beyond exact matches. The algorithm should recognise that someone with “stakeholder engagement” experience possesses relevant capabilities for a role requiring “client relationship management.” This discovery function surfaces qualified candidates who might phrase their experience differently or come from adjacent industries with transferable skills.

Explainability features let you understand and communicate why specific candidates ranked highly. The system should highlight which qualifications, experiences, or skills drove the match score, enabling hiring managers to make informed decisions and providing candidates with transparent feedback. This transparency builds trust and supports compliance with emerging regulations around automated decision-making.
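For a simple weighted scoring model, an explanation can be as direct as listing how much each factor contributed to the total. The sketch below assumes such a linear model; the feature names and weights are invented, and more complex models such as neural rankers need dedicated attribution techniques instead.

```python
def explain_score(weights, candidate_features):
    """Return the total score and each feature's contribution, largest first.

    Assumes a linear model: score = sum(weight * feature value).
    Features missing from the candidate count as 0.0.
    """
    contributions = {
        name: weight * candidate_features.get(name, 0.0)
        for name, weight in weights.items()
    }
    total = sum(contributions.values())
    ranked = sorted(contributions.items(), key=lambda item: item[1], reverse=True)
    return total, ranked


# Invented weights and feature values, each feature scaled to the range 0-1.
weights = {"skills_overlap": 0.5, "experience_depth": 0.3, "recency": 0.2}
candidate = {"skills_overlap": 0.8, "experience_depth": 0.5, "recency": 1.0}

total, breakdown = explain_score(weights, candidate)
print(f"Match score: {total:.2f}")
for name, contribution in breakdown:
    print(f"  {name}: {contribution:.2f}")
```

A breakdown like this gives hiring managers something concrete to challenge, for example when recency dominates a score for a role where depth of experience matters more.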

Human oversight remains essential at multiple stages. Recruiters review algorithmic recommendations, hiring managers make final decisions, and HR teams monitor for unintended patterns or biases. Algorithms augment human judgement rather than replacing it, handling the time-consuming work of initial screening whilst leaving nuanced evaluation to people.

Continuous fairness monitoring detects demographic disparities in who gets recommended, shortlisted, or hired. Immutable audit logs track every algorithmic decision, creating accountability and enabling retrospective analysis if concerns arise. These safeguards become increasingly important as workers’ exposure to automated matching could rise from 42.3% to 55.5% in the medium term, attracting greater regulatory scrutiny.
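One common way to approximate an immutable log in software is a hash chain, where each entry stores a hash of the previous entry so that later edits become detectable. The sketch below is a minimal in-memory example using Python's standard `hashlib` and `json` modules. The `AuditLog` class and the event fields are hypothetical, and the result is tamper-evident rather than truly immutable, which would also require write-once storage.

```python
import hashlib
import json

GENESIS_HASH = "0" * 64  # placeholder "previous hash" for the first entry


class AuditLog:
    """Hypothetical append-only log in which each entry is chained to the last."""

    def __init__(self):
        self.entries = []

    @staticmethod
    def _digest(prev_hash, event):
        payload = json.dumps(event, sort_keys=True)
        return hashlib.sha256((prev_hash + payload).encode("utf-8")).hexdigest()

    def append(self, event):
        """Record an event and return the hash that seals it."""
        prev_hash = self.entries[-1]["hash"] if self.entries else GENESIS_HASH
        entry_hash = self._digest(prev_hash, event)
        self.entries.append({"event": event, "prev_hash": prev_hash, "hash": entry_hash})
        return entry_hash

    def verify(self):
        """Recompute every hash; False means an entry was altered or reordered."""
        prev_hash = GENESIS_HASH
        for entry in self.entries:
            if entry["prev_hash"] != prev_hash:
                return False
            if entry["hash"] != self._digest(prev_hash, entry["event"]):
                return False
            prev_hash = entry["hash"]
        return True


log = AuditLog()
log.append({"candidate": "c3", "role": "analyst", "score": 0.82, "action": "recommended"})
log.append({"candidate": "c7", "role": "analyst", "score": 0.41, "action": "not recommended"})
print(log.verify())  # True
```

If anyone later changes a recorded score, `verify()` returns False because the stored hash no longer matches the recomputed one.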

A phased integration roadmap helps HR teams adopt matching algorithms successfully:

- Pilot the system on a single role or department to learn how it performs in your context

- Gather feedback from recruiters and hiring managers about recommendation quality and usability

- Refine success profiles and algorithm parameters based on this feedback

- Expand to additional roles whilst monitoring fairness metrics across demographics

- Integrate with existing ATS and HR systems for seamless workflow

- Establish regular review cycles to update profiles and assess algorithm performance

- Train recruitment teams on interpreting algorithmic recommendations and maintaining human oversight

Pro Tip: Maintain clear decision rights between algorithms and humans. Let the system handle high-volume screening and surface promising candidates, but ensure hiring managers retain authority over shortlisting and final selection. This division of labour maximises efficiency whilst preserving human judgement on consequential decisions.

Regulatory developments in the UK and EU increasingly address algorithmic management in employment. Organisations using automated matching must ensure compliance with data protection rules, non-discrimination requirements, and emerging AI governance frameworks. Proactive fairness monitoring and transparent documentation position you to meet these obligations whilst benefiting from algorithmic efficiency.

Effective implementation transforms matching algorithms from a technical curiosity into a practical tool that improves hiring speed, quality, and fairness. By combining algorithmic power with human oversight and continuous improvement, HR teams can navigate high-volume recruitment in competitive markets whilst keeping AI in recruitment a supportive technology rather than a replacement for human expertise.

Discover advanced AI recruitment solutions with We Are Over The Moon

Modern recruitment demands tools that go beyond CV screening to assess genuine candidate potential. We Are Over The Moon provides AI-driven recruitment solutions that match on skills not CVs, using real assessments including AI interviews, company challenges, cultural matching, cognitive tests, and video pitches. These approaches align with the advanced matching principles explored throughout this guide, prioritising fairness, transparency, and human oversight.

Our platform is designed for HR professionals and recruitment specialists in the UK and Spain who recognise that traditional CV screening misses exceptional candidates. By evaluating actual capabilities rather than credential keywords, you surface talent that conventional methods overlook. Explore how our solutions can transform your hiring process and learn more about We Are Over The Moon and our mission to revolutionise recruitment through intelligent, fair technology.

Frequently asked questions

What exactly does a matching algorithm do in recruitment?

A matching algorithm evaluates how well candidates align with job requirements, producing ranked lists or compatibility scores. Modern systems use skills graphs, semantic search, and learning-to-rank models rather than simple keyword filters. They assess fit based on skills, experience, and contextual factors whilst incorporating fairness controls to avoid bias.

How do modern matching algorithms improve fairness in hiring?

Advanced algorithms include built-in fairness constraints that detect and mitigate demographic bias in recommendations. They monitor for disparate impact across protected characteristics, maintain audit logs of decisions, and combine algorithmic screening with human oversight. This multi-layered approach can reduce bias compared to purely manual or simple keyword-based screening.

Can these algorithms handle multilingual job markets like the UK and Spain?

Yes, modern matching systems support multilingual job title and skill matching, recognising equivalent terms across languages. They understand cultural variations in how roles are described and can evaluate candidates consistently regardless of application language. This capability is essential for organisations recruiting across multiple European markets.

Why is human oversight still necessary with automated matching?

Algorithms excel at high-volume screening but lack contextual judgement about team dynamics, growth potential, and nuanced fit considerations. Human oversight ensures recommendations align with organisational values, catches algorithmic errors, and maintains accountability for hiring decisions. The most effective approach combines algorithmic efficiency with human expertise.

How might regulations affect the use of matching algorithms in recruitment?

Emerging UK and EU frameworks increasingly scrutinise automated hiring tools, requiring transparency, fairness monitoring, and human oversight. Organisations must ensure compliance with data protection rules and non-discrimination requirements. Proactive fairness monitoring and clear documentation help meet these obligations whilst benefiting from algorithmic efficiency.